Background

The academic and scientific community has reacted at pace to gather evidence to inform decision making about COVID-19. We mapped the nature, scope and quality of evidence syntheses on COVID-19 to explore the relationship between review quality and the extent of researcher, policy and media interest. We found low quality reviews being published at pace, often with short publication turnarounds. Despite lacking robust methods, many of these reviews received substantial attention across both academic and public platforms.

Since the emergence of the coronavirus disease 2019 (COVID-19) in December 2019 in Wuhan, China, there has been a proliferation of research related to its epidemiology, diagnosis, treatment, prevention and impact. Making sense of research by bringing together studies in systematic reviews, with or without meta-analysis, is a well-established method in medicine and health research. However, poorly conducted systematic reviews can lead to inaccurate representations of the evidence, inaccurate estimates of treatment effectiveness, misleading conclusions, and reduced applicability, limiting their usefulness and ultimately contributing to research waste.

Aims

Our aims were to map the nature, scope and quality of evidence syntheses on COVID-19 published between December 2019 and July 2020, and to explore the relationship between review quality and the extent of researcher, policy and media interest. We addressed the following research questions:

1. To what extent are multiple systematic reviews addressing the same research questions being published?

2. What is the methodological and reporting quality of published systematic reviews addressing research questions related to COVID-19?

3. In what ways are established systematic review methods being compromised in an effort to inform transmission, diagnosis, treatment and care of people with COVID-19? And what is the potential impact of these methodological shortcuts?

4. To what extent have published systematic reviews addressing COVID-19 research questions received attention from other researchers, policy makers and the media, and how does that attention relate to the methodological and reporting quality of those reviews?

Activity

We conducted a systematic review that included systematic reviews, rapid reviews, overviews and qualitative evidence syntheses addressing research questions relating to COVID-19 and published between December 2019 and June 2020.

Our results show that in the rush to get evidence, there was a significant output of low quality systematic reviews missing cornerstones of best practice. Only a third met the definition of a systematic review according to established criteria and the majority of these had critical flaws.

For example, only a third reported registering a protocol, and fewer than one in five searched named COVID-19 databases. There was also considerable overlap in the topics of reviews, some with discordant findings. Review conduct and publication were rapid, with many reviews reported as being conducted within three weeks and published within three weeks of submission.

Of more concern, perhaps, was the attention these reviews received as measured through the metrics of citation and media attention.

The pandemic has highlighted the importance of ensuring rigour in systematic review methods and reporting if these efforts are to be helpful to decision makers. The responsibility for this must fall to us all – researchers, funders, peer reviewers and editors.

What we did next

In order to propose appropriate approaches to the issues highlighted above, we need to understand whether researchers are learning from the available evidence as it accumulates. We therefore set out to map the extent and nature of systematic reviews addressing one topic as an exemplar: the effectiveness of convalescent plasma therapy for COVID-19. By exploring the timelines, characteristics and reported methods in this body of evidence, we hoped to be able to offer potential solutions to avoid similar research waste in the future.

We found 51 systematic reviews that attempted to answer this question. We identified several key issues: proliferation and inconsistency of reviews, poor quality, and overlap with, but no learning from, existing research. From this we proposed ways in which the breadth of the research community can help to prevent infodemics in the future.

Conclusions

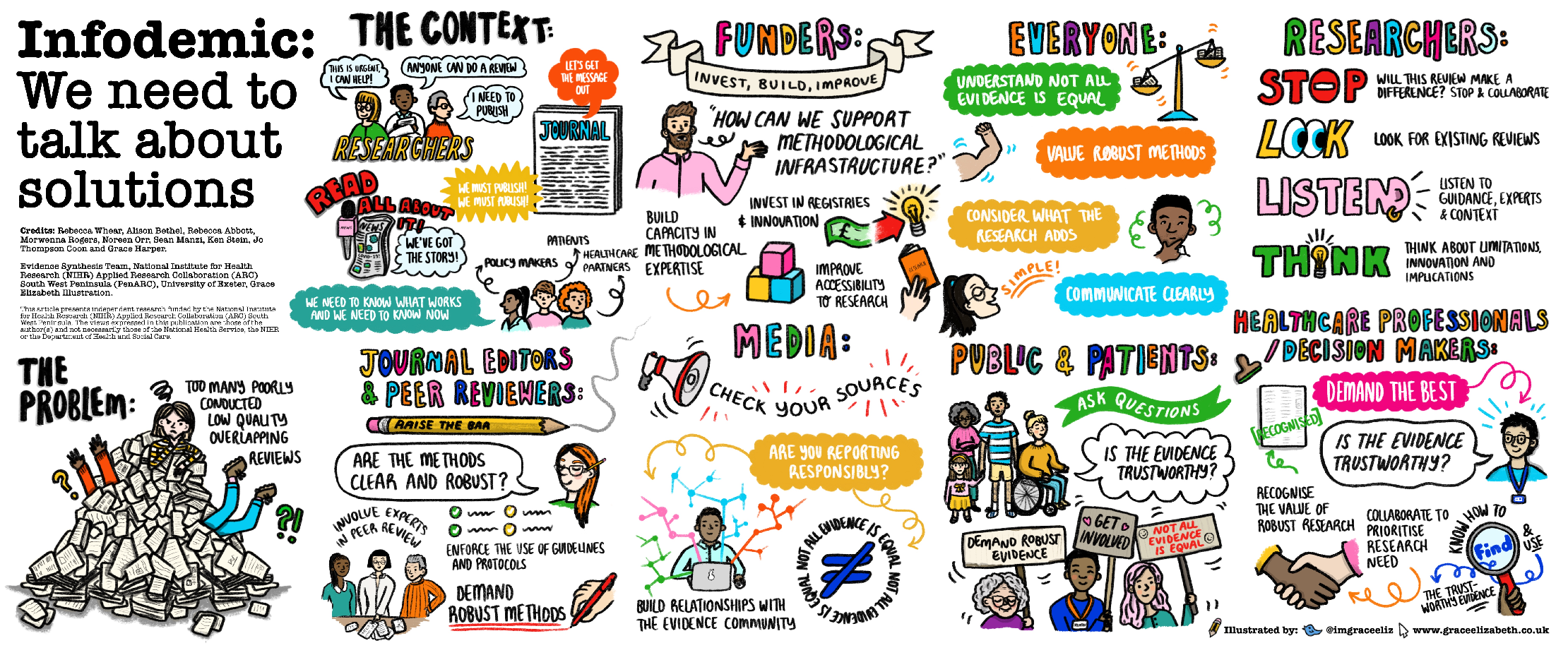

Researchers need to conduct, appraise, interpret and disseminate systematic reviews better. The research community (researchers, peer reviewers, journal editors, funders, decision makers, clinicians, journalists and the public) needs to work together to facilitate the conduct of robust systematic reviews that are published and communicated in a timely manner, thereby reducing research duplication and waste and increasing the transparency and accessibility of all systematic reviews.

Download the Infodemic: We need to talk about solutions poster

Related publications

Systematic reviews of convalescent plasma in COVID-19 continue to be poorly conducted and reported: a systematic review

OP31 A snapshot of the characteristics, quality and volume of the COVID-19 evidence synthesis infodemic: systematic review

Characteristics, quality and volume of the first 5 months of the COVID-19 evidence synthesis infodemic: a meta-research study

Resources

- Blog: BMJ EBM Spotlight Navigating the COVID-19 infodemic – can we do better?

- Protocol: PROSPERO Characteristics, quality and volume of the evidence synthesis infodemic – a living systematic review

- Poster: We need to talk about solutions looking through the lens of evidence syntheses of convalescent plasma therapy: a systematic review

ARC South West Staff

Professor Jo Thompson-Coon

Professor of Evidence Synthesis and Health Policy

Morwenna Rogers

Information Specialist

Alison Bethel

Information Specialist

Rebecca Whear

Senior Research Fellow